When to Use Each Telemetry Signal: Logs, Traces, and Metrics

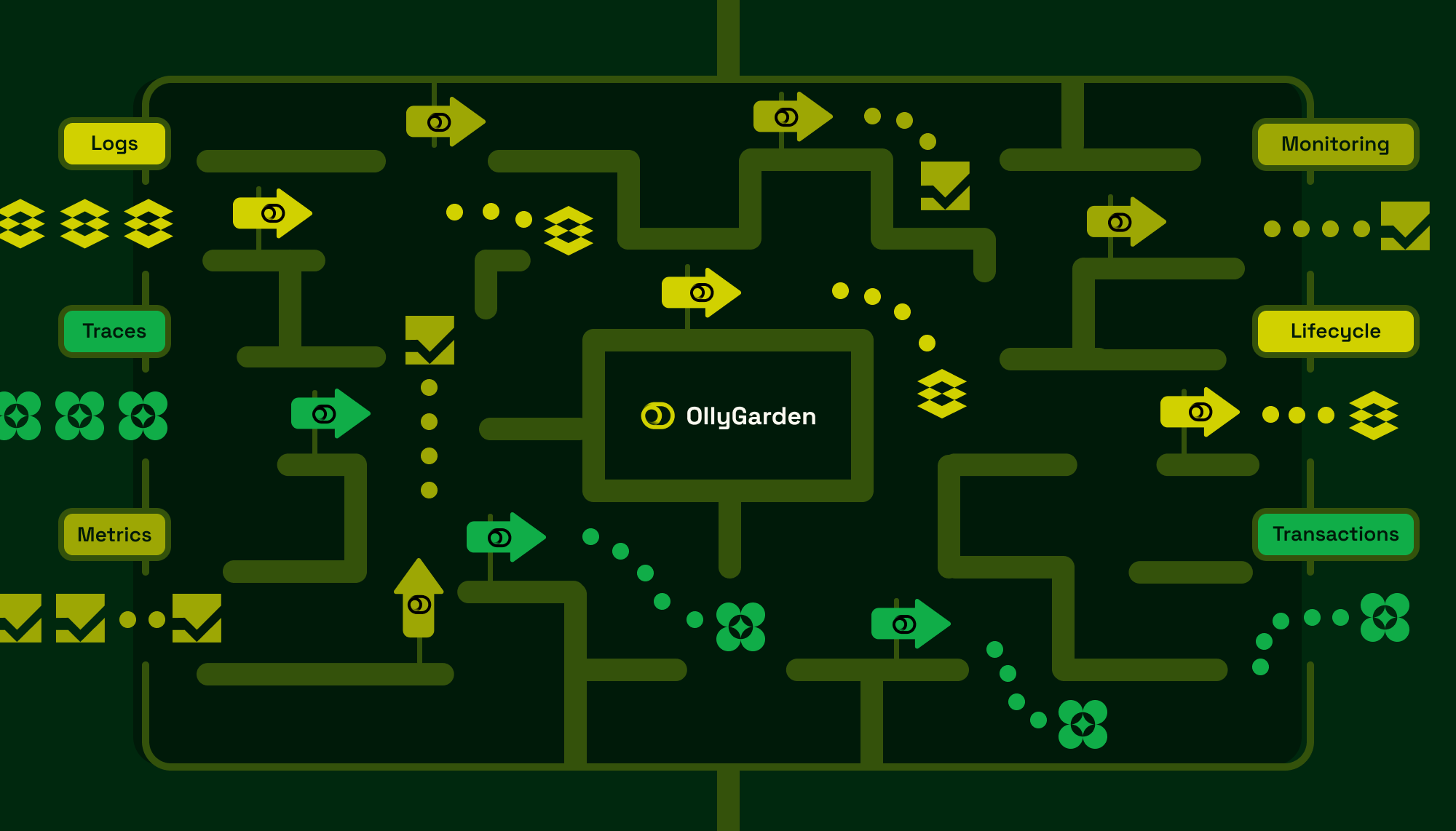

Understanding when to use logs, traces, or metrics is fundamental to building effective observability. Each signal serves a distinct purpose, and choosing the right one for a given situation directly impacts your ability to debug, monitor, and understand your systems. The challenge is that these signals overlap in capability, leading teams to either over-instrument with redundant data or miss critical insights by using the wrong signal for the job.

Logs: The system lifecycle narrator

Logs are the grandfather of all telemetry signals. Most of us learned to read logs and error messages early in our computing journey, first as users trying to understand why something failed, and eventually as developers writing log statements to communicate system state. Because of this history, logs remain the most universal and accessible signal.

Most legacy systems rely exclusively on logs. Before modern observability practices, aggregating log records was the standard approach to understanding request rates, queue depths, or user journeys across systems. Many teams implemented correlation IDs to tie logs for a specific request across multiple services, essentially building a primitive form of distributed tracing before dedicated tracing systems existed.

Today, logs serve a more focused purpose: understanding the lifecycle of an application. They excel at recording when a pooled connection to a database was established, when a ring was rebalanced, when a circuit breaker opened or closed, when a critical resource stopped working, or when a service recovered from degraded mode. These are events about the application itself, not about individual business transactions.

The strength of logs lies in their flexibility and low barrier to entry. Any developer can add a log statement. The weakness is that this flexibility often leads to inconsistent structure, making analysis difficult at scale.
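One common way to keep that flexibility without losing analyzability is to emit lifecycle events as structured records. The following is a minimal sketch using only Python's standard `logging` module; the `JsonFormatter` class, the `fields` key, and the event and dependency names are assumptions made for illustration, not a prescribed schema.

```python
import json
import logging


class JsonFormatter(logging.Formatter):
    """Render each record as one JSON object so fields stay queryable."""

    def format(self, record: logging.LogRecord) -> str:
        payload = {
            "ts": record.created,
            "level": record.levelname,
            "logger": record.name,
            "event": record.getMessage(),
        }
        # Anything passed as extra={"fields": {...}} becomes an attribute
        # on the record; merge it into the output as top-level keys.
        payload.update(getattr(record, "fields", {}))
        return json.dumps(payload, sort_keys=True)


def lifecycle_logger(name: str) -> logging.Logger:
    """Return a logger that writes one JSON line per lifecycle event."""
    logger = logging.getLogger(name)
    if not logger.handlers:
        handler = logging.StreamHandler()
        handler.setFormatter(JsonFormatter())
        logger.addHandler(handler)
    logger.setLevel(logging.INFO)
    logger.propagate = False
    return logger


log = lifecycle_logger("orders")
# A lifecycle event: it describes the application, not a single request.
log.info(
    "circuit_breaker_opened",
    extra={"fields": {"dependency": "payments-db", "failures": 5}},
)
```

Keeping the event name stable and pushing the variable parts into fields is what makes these records easy to filter and count later.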

Traces: The transaction investigator

Traces capture telemetry for business transactions, typically in the context of an end-user request that might touch dozens or hundreds of services. Unlike logs, which describe system state, traces describe what happened during a specific operation and how long each step took.

Think of spans, the building blocks of traces, as super-logs. A span is essentially a log entry with a timestamp, a duration, causality relationships through parent span references, and built-in correlation IDs through the trace ID. The critical addition is context propagation: a standardized mechanism to pass trace and span IDs to downstream services, ensuring that all participants in a transaction can contribute to the same trace. This is what those hand-rolled correlation ID solutions were trying to achieve, but traces provide it as a first-class capability with standard protocols and automatic propagation.
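To make the "super-log" idea concrete, here is a small sketch of a span record and of context propagation between two services. It is not a real tracing SDK: `Span`, `start_span`, `inject`, and `extract` are hypothetical names, and the header layout assumes the W3C Trace Context `traceparent` format (version, 32-hex-character trace ID, 16-hex-character parent span ID, flags) with none of the validation a production implementation would need.

```python
import secrets
import time
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Span:
    name: str
    trace_id: str                     # shared by every span in the transaction
    span_id: str
    parent_id: Optional[str] = None   # causality: the span that caused this one
    start: float = field(default_factory=time.time)
    duration: Optional[float] = None
    attributes: dict = field(default_factory=dict)

    def end(self) -> None:
        self.duration = time.time() - self.start


def start_span(name: str, parent: Optional[Span] = None) -> Span:
    """Start a root span, or a child that inherits the parent's trace ID."""
    trace_id = parent.trace_id if parent else secrets.token_hex(16)
    parent_id = parent.span_id if parent else None
    return Span(name, trace_id, secrets.token_hex(8), parent_id)


def inject(span: Span, headers: dict) -> None:
    """Write the current context into outgoing request headers."""
    # version - trace id - parent span id - flags ("01" means sampled)
    headers["traceparent"] = f"00-{span.trace_id}-{span.span_id}-01"


def extract(headers: dict) -> Optional[Span]:
    """Return a stand-in for the caller's span, or None if no context arrived."""
    parts = headers.get("traceparent", "").split("-")
    if len(parts) != 4:
        return None
    _version, trace_id, span_id, _flags = parts
    return Span("remote-parent", trace_id, span_id)


# Service A handles the user request and calls service B.
root = start_span("POST /checkout")
outgoing_headers: dict = {}
inject(root, outgoing_headers)

# Service B receives the headers and continues the same trace.
child = start_span("charge-card", parent=extract(outgoing_headers))
child.end()
root.end()
```

The child span ends up with the same trace ID as the root and the root's span ID as its parent, which is exactly the correlation that hand-rolled correlation IDs approximated.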

The power of traces becomes evident when debugging errors. A trace shows not just that an error occurred, but the exact path the request took, which services were involved, and where the failure originated. When aggregated across many transactions, traces reveal user behavior patterns, system bottlenecks, and optimization opportunities.

One pattern that traces expose with unusual clarity is N+1 queries. While this anti-pattern is difficult to spot with other signals, a trace immediately reveals when a single request triggers dozens of sequential database or network calls. The visual representation of span timing makes the problem obvious in a way that logs or metrics cannot match.
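The same pattern can be found programmatically once spans are available as data. The sketch below assumes spans are plain dictionaries with `parent_id` and `name` keys and flags any parent that has many identically named children; the `find_n_plus_one` helper and its default threshold are illustrative choices, not a standard heuristic.

```python
from collections import Counter


def find_n_plus_one(spans, threshold=10):
    """Return {(parent_id, span_name): count} for suspiciously repeated children.

    `spans` is an iterable of dicts with at least 'parent_id' and 'name'.
    """
    counts = Counter(
        (s["parent_id"], s["name"])
        for s in spans
        if s["parent_id"] is not None
    )
    return {key: n for key, n in counts.items() if n >= threshold}


# One request: load the orders once, then query the items for each order.
spans = [{"span_id": "root", "parent_id": None, "name": "GET /orders"}]
spans.append({"span_id": "q0", "parent_id": "root", "name": "SELECT orders"})
spans += [
    {"span_id": f"q{i}", "parent_id": "root", "name": "SELECT order_items"}
    for i in range(1, 26)
]

suspects = find_n_plus_one(spans)
```

Here `suspects` contains a single entry, the 25 `SELECT order_items` spans under the root, while the one-off `SELECT orders` query is ignored.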

Spans carry highly detailed attributes: which feature flags were active, the user's IP address, whether this is a VIP customer, which authentication mechanism was used, which payment method was selected, and which specific service instances processed the request. This level of detail makes traces the backbone of observability. When you have questions or theories about service behavior, traces often provide the answers.

This power comes with trade-offs. The detail that makes traces valuable also makes them expensive. The sheer volume of spans in a complex system creates significant storage and processing costs. Traces are also the most difficult signal to learn and implement correctly, which drives teams toward auto-instrumentation. While convenient, auto-instrumentation often increases volume further without adding proportional value.

Metrics: The pre-calculated answer engine

Metrics are aggregations of events or numeric representations of system state. They answer questions like: what is the current queue depth? How many users visited this page? What is the p99 latency for a specific endpoint?

As aggregations, metrics require choosing dimensions upfront. You might aggregate by endpoint path, service location, or page visited. You typically do not store the IP address for each individual user visit unless you specifically need to count visits per IP. Time-series databases, the systems specialized for storing metrics, are optimized for aggregated data rather than high-cardinality dimensions.
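The effect of choosing dimensions can be illustrated with a toy counter that keeps one series per unique combination of dimension values. This is a sketch under stated assumptions: `RequestCounter` and the event fields are hypothetical, and a real time-series database stores series very differently.

```python
from collections import Counter


class RequestCounter:
    """Count events, keeping one series per unique combination of dimensions."""

    def __init__(self, dimensions):
        self.dimensions = tuple(dimensions)
        self.series = Counter()

    def record(self, event: dict) -> None:
        # Only the chosen dimensions survive; everything else is discarded.
        key = tuple(event[d] for d in self.dimensions)
        self.series[key] += 1


# 100 visits to the same page from 100 different clients.
events = [
    {"endpoint": "/cart", "region": "eu", "client_ip": f"10.0.0.{i}"}
    for i in range(100)
]

by_endpoint = RequestCounter(["endpoint", "region"])
by_client = RequestCounter(["endpoint", "region", "client_ip"])
for event in events:
    by_endpoint.record(event)
    by_client.record(event)
```

`by_endpoint` holds a single series with a count of 100, while `by_client` holds 100 series with a count of 1 each. Adding a high-cardinality dimension multiplies the number of series, and any dimension left out at record time cannot be queried later.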

Metrics excel at pre-calculating answers to questions you know you will ask. RED metrics (rate, errors, duration) for HTTP services are the classic example. If you know you will want to track request rates, error percentages, and latency distributions for every endpoint, metrics provide this efficiently and at low query cost.
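As a rough sketch of what "pre-calculating" means for RED, the class below keeps only counters and a fixed-bucket latency histogram per endpoint, never the individual requests. The `RedMetrics` name, the bucket bounds, and the method names are assumptions for illustration; the quantile it returns is the upper bound of the bucket containing the requested quantile, which is a coarse estimate.

```python
import bisect
from collections import defaultdict

# Upper bounds of the latency buckets in seconds (hypothetical values).
BUCKETS_S = (0.05, 0.1, 0.25, 0.5, 1.0, 2.5, float("inf"))


class RedMetrics:
    def __init__(self):
        self.requests = defaultdict(int)
        self.errors = defaultdict(int)
        self.histogram = defaultdict(lambda: [0] * len(BUCKETS_S))

    def observe(self, endpoint, duration_s, is_error=False):
        self.requests[endpoint] += 1
        if is_error:
            self.errors[endpoint] += 1
        # Index of the first bucket whose upper bound is >= the duration.
        self.histogram[endpoint][bisect.bisect_left(BUCKETS_S, duration_s)] += 1

    def rate(self, endpoint, window_s):
        """Requests per second over the observation window."""
        return self.requests[endpoint] / window_s

    def error_ratio(self, endpoint):
        total = self.requests[endpoint]
        return self.errors[endpoint] / total if total else 0.0

    def quantile_upper_bound(self, endpoint, q):
        """Upper bound of the bucket that contains the q-quantile."""
        total = self.requests[endpoint]
        if total == 0:
            return None
        seen = 0
        for bound, count in zip(BUCKETS_S, self.histogram[endpoint]):
            seen += count
            if seen >= q * total:
                return bound
        return BUCKETS_S[-1]


red = RedMetrics()
for _ in range(98):
    red.observe("/checkout", 0.03)
for _ in range(2):
    red.observe("/checkout", 0.8, is_error=True)
```

Answering "what is the p99 for `/checkout`?" is then a walk over seven numbers, regardless of how many requests were observed. That is the low query cost the signal is known for.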

The limitation appears during ad-hoc exploration. While metrics can answer many questions, there will inevitably be investigations where you need a dimension you did not anticipate. Am I seeing high latency for all users, or only those in Europe? Only Germany? Only Berlin? If you did not include geographic dimensions in your metrics, you cannot answer these questions without re-instrumenting.

Metrics are the classic signal in monitoring. When operating a database, experienced operators know which metrics to watch: connection pool utilization, query latency distributions, replication lag. These indicators quickly reveal the health of the system without requiring investigation into individual transactions.

Choosing the right signal

The decision framework is straightforward once you understand each signal's purpose.

Use traces to record events related to business transactions. An HTTP request arriving, a user placing an order, a payment being processed: these are trace-worthy operations. The value is in understanding the complete path and timing of individual transactions.

Use metrics to pre-calculate answers to questions you know you will ask. If you need to monitor request rates, error percentages, or latency distributions, define those metrics upfront. The value is in fast, cheap access to known indicators.

Use logs to understand lifecycle events of your services. Dependencies changing state, configuration reloading, the application starting or stopping gracefully: these belong in logs. The value is in understanding the application as a running system, not the transactions it processes.

When signals overlap

Real systems often require multiple signals for the same event. A database connection failure might warrant a log (lifecycle event: dependency unavailable), affect a metric (connection error count), and appear in traces (failed span for database operations). This overlap is expected and appropriate.
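A small sketch of that database example, with in-memory stand-ins for each signal, might look like the following. The `run_query` function, the dictionary used as a span, the `Counter` used as a metrics client, and the `orders-db` name are all hypothetical; real code would call its logging, metrics, and tracing libraries instead.

```python
import logging
from collections import Counter

log = logging.getLogger("orders.db")
connection_errors = Counter()  # stand-in for a metrics client


def run_query(span: dict, connect):
    """Run `connect`, recording a failure in all three signals."""
    try:
        return connect()
    except ConnectionError as exc:
        # Log: the dependency became unavailable.
        log.warning("dependency_unavailable dependency=orders-db reason=%s", exc)
        # Metric: a cheap aggregate for dashboards and alerts.
        connection_errors["orders-db"] += 1
        # Trace: this particular transaction failed at this step.
        span["status"] = "error"
        span["error"] = str(exc)
        raise


def failing_connect():
    raise ConnectionError("connection refused")


span = {"name": "SELECT orders", "status": "ok"}
try:
    run_query(span, failing_connect)
except ConnectionError:
    pass  # the caller decides how to handle the failure
```

Each signal records the same failure from a different angle: the log says what happened to the dependency, the counter says how often, and the span says which request was affected.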

The mistake is using one signal where another would be more effective. Aggregating log records to compute request rates works, but metrics do this more efficiently. Searching traces to understand when a service entered degraded mode works, but logs make this pattern explicit. Understanding why a specific request failed from metrics alone is nearly impossible, while a trace makes the answer visible.

Match the signal to the question. System health and known indicators call for metrics. Transaction debugging and behavior analysis call for traces. Application lifecycle and operational events call for logs.

Summary

Each telemetry signal has a distinct purpose that reflects its design and history. Logs narrate system lifecycle events: startups, configuration changes, dependency state transitions. Traces capture business transaction details: request paths, timing, errors, and the attributes that explain behavior. Metrics pre-calculate answers to monitoring questions: rates, distributions, and aggregate states.

Effective observability uses all three signals appropriately. The goal is not coverage through redundancy, but precision through choosing the right tool for each question you need to answer.